Get the latest

ESG REPORT

Fortified cyber readiness and resilience

Discover a new, validated approach to cyber recovery testing.

SOLUTION BRIEF

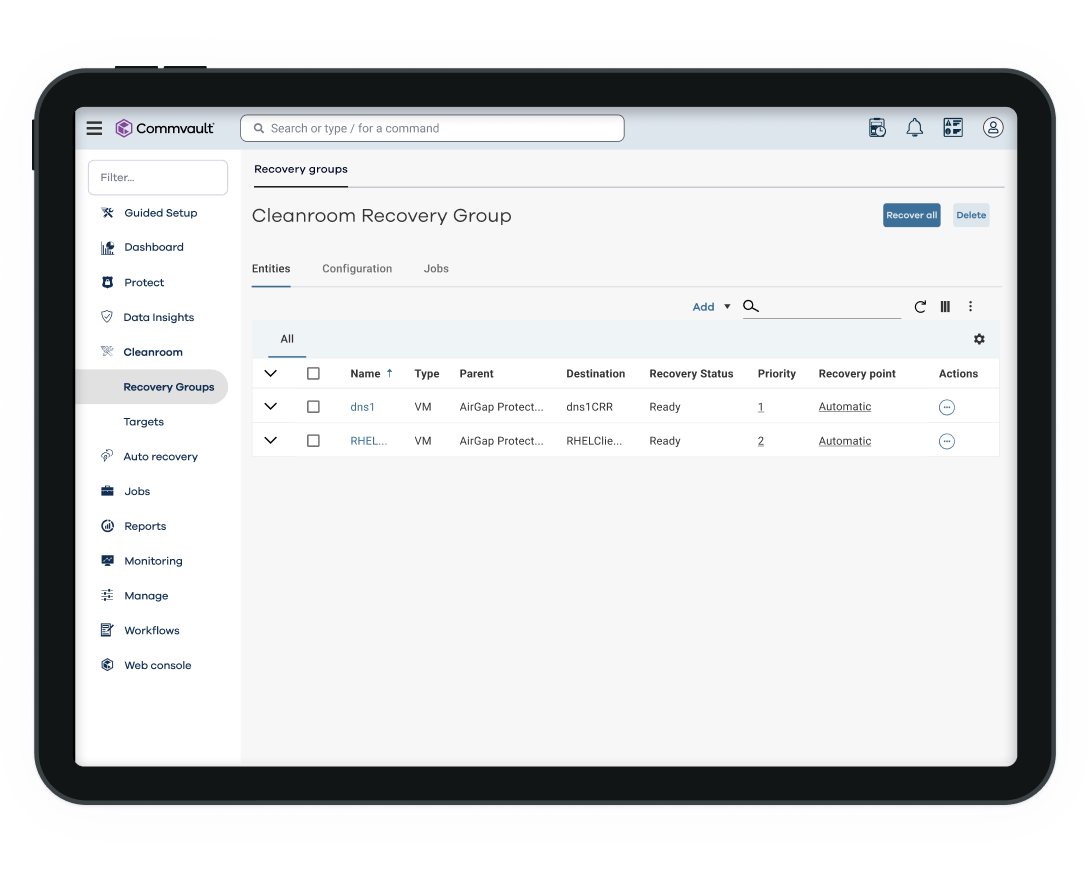

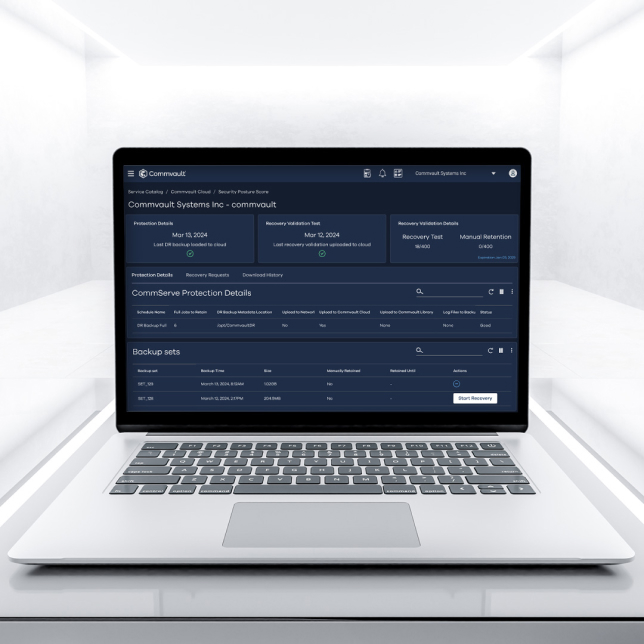

Clean recovery to an on-demand cleanroom

Unlock the ultimate safe haven in a chaotic, hybrid world.

IDC MARKET NOTE

Unlocking cyber resilience with recovery

Strengthen the “Identify” and “Recover” functions of the NIST Cybersecurity Framework for innovative security.

The world has changed.

99%

of ransomware tampers with security and backup infrastructure.

66%

of organizations surveyed were breached in 2023.

24 days

is the average reported time to recover from a cyberattack.

Government Accountability Office (GAO)

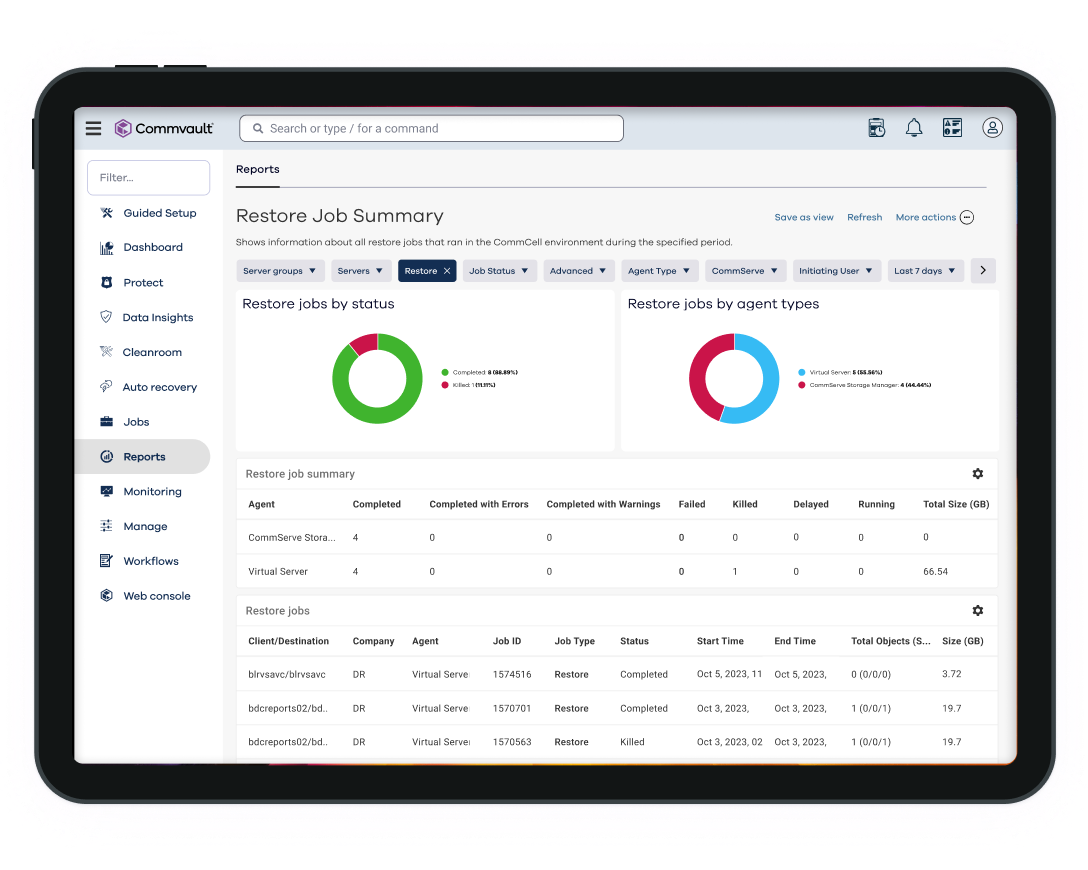

COMMVAULT CLEANROOM RECOVERY

Put your resilience to the test.

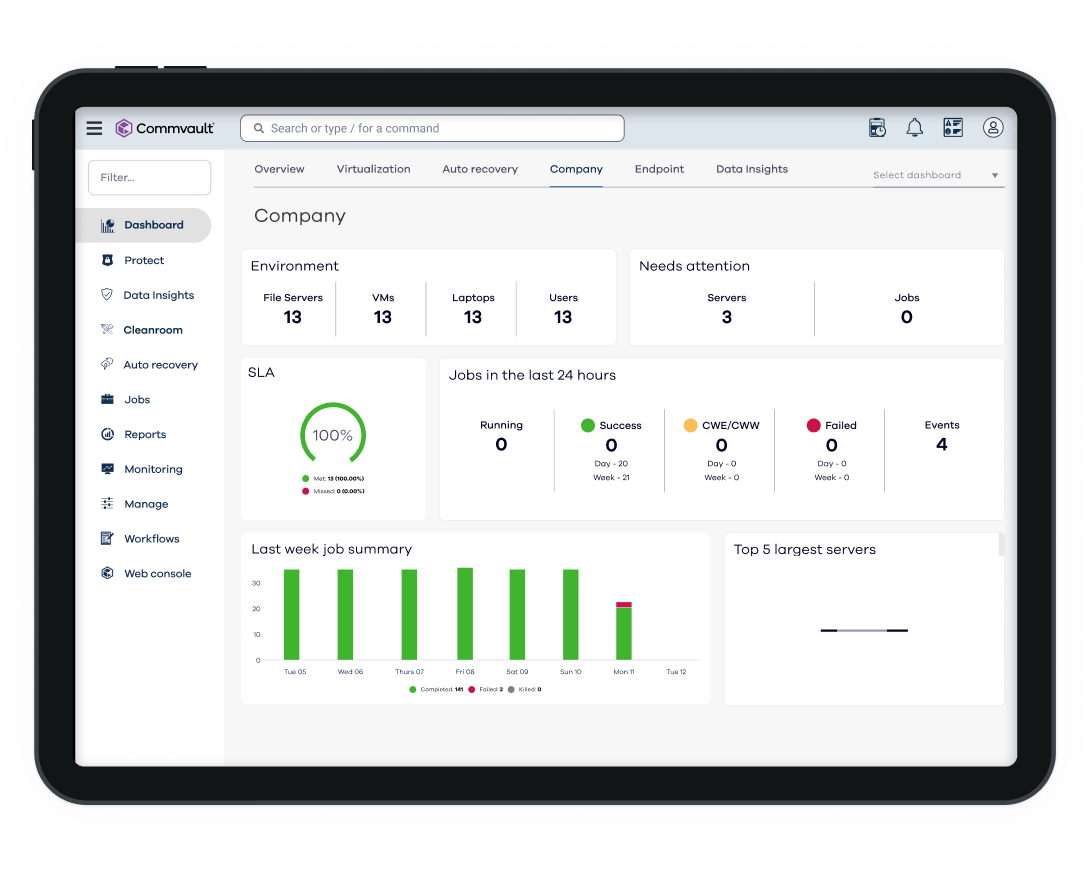

Commvault Cloud Cleanroom Recovery is the only offering that makes reliable cyber recovery testing and readiness possible for any enterprise.

SIMPLE

Readiness within reach, for any organization.

Any enterprise can achieve cyber recovery readiness and resilience without the cost of dark sites or sprawling, complex infrastructure.

SECURE

Clean recovery. Clean locations. Isolated testing.

Built-in integrations with Microsoft Defender for threat scanning and Palo Alto Networks Cortex XSOAR for forensics.

INTELLIGENT

AI-enhanced for reliable testing.

Intelligent, automated Cleanpoint™ Validation includes orchestration and post-validation for more efficient, reliable recovery.

REFERENCES

Everyone is talking about Cleanroom Recovery

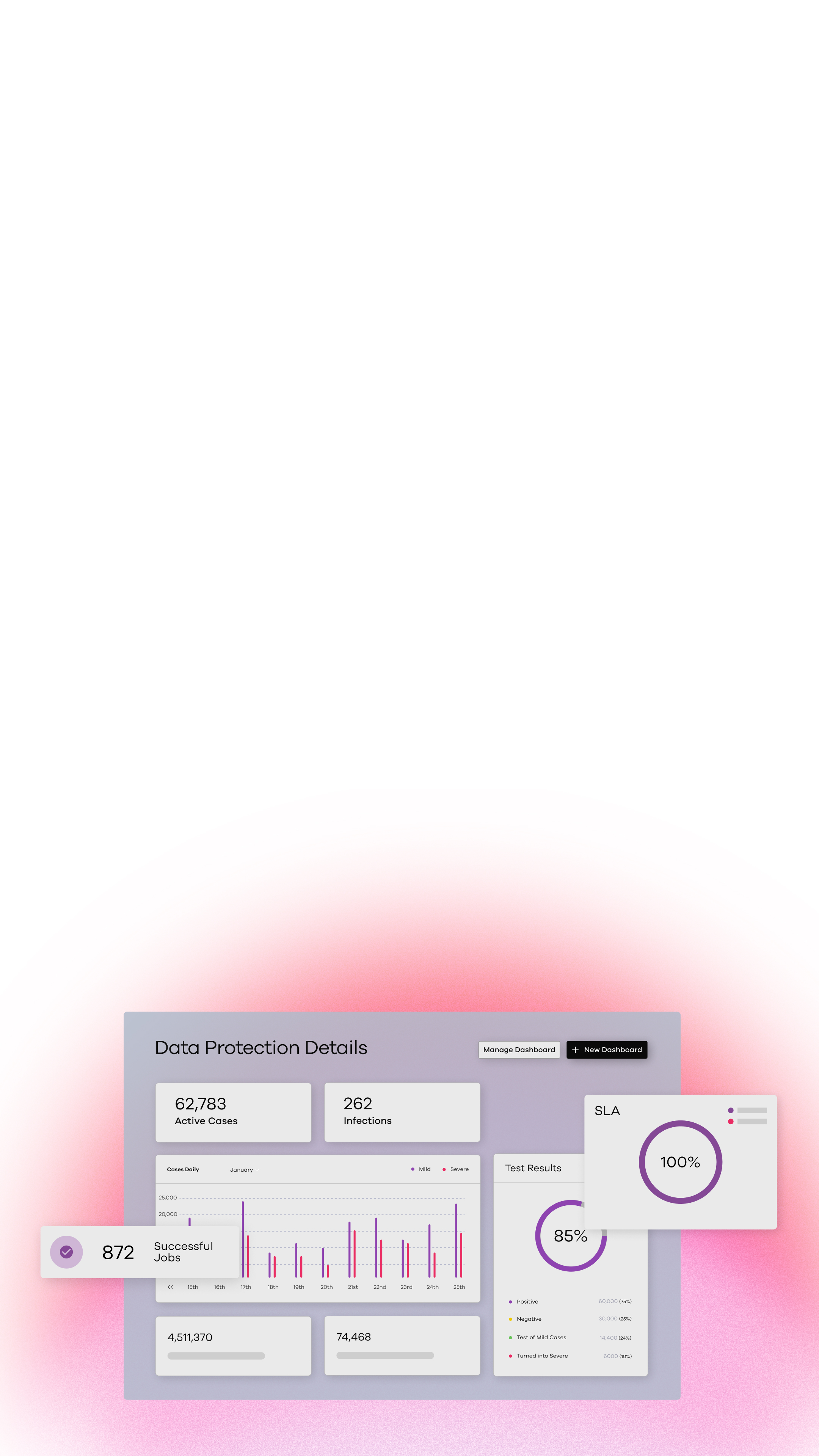

Your Platform for Total Cyber Resilience

We believe our platform is the first to offer true cloud cyber resiliency, built for AI, hybrid, chaos, and scale.

OUR REACH

Supporting more than 100,000 companies

STRATEGIC PARTNERS & INTEGRATIONS

Unlock the full potential of true cyber resilience

Perform a clean, easy restore to a new, uncontaminated Microsoft Azure tenant.

Gain advanced security measures, data intelligence, and threat detection capabilities.

Reduce incident response time with automated orchestration and combined insights.

Ready to take the next step?

Experience the platform that delivers true cloud cyber resilience